Rethinking Docker: Development Environments, Linux Overhead, and Redis in Practice

A practical reassessment of Docker across local development, Docker Desktop overhead, Linux server performance, and a small Redis deployment with Compose.

My first real use of Docker was to keep development environments consistent. Some older projects depended on specific versions of Node.js, the JDK, Maven, and MySQL, plus a pile of local assumptions. Move the project to another machine, and it might fail before the first line of business code ran.

Docker was attractive because the promise was simple: put the runtime environment into configuration, and let someone start the project with one command after cloning the repository.

Later, after using Docker Desktop on macOS and Windows for a long time, I formed another impression: Docker felt heavy. Starting Docker Desktop could spin up the fan, memory usage went up, file watching sometimes became unreliable, and hot reload could become slower than expected.

That impression made me hesitate to use Docker on small Linux servers. If a machine only has 1 core and 2 GB of memory, would Docker waste too much of it?

That changed when I needed to deploy Redis on a lightweight server for configuration synchronization. Putting the old development experience and the server deployment experience side by side made the conclusion much clearer: Docker’s cost depends heavily on where and how it is used. Docker Desktop on a laptop, Docker Engine on a Linux server, Compose for local development, and a Redis container in production are related, but they are not the same problem.

What Docker Solves in Development

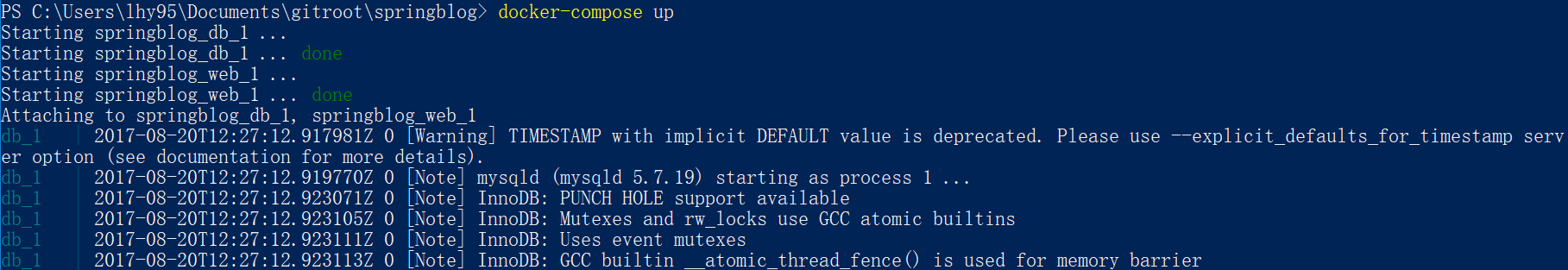

In 2017, I used Docker on a small Spring Boot demo. The problem was very typical:

- The project needed JDK 1.8 and Maven.

- The backend depended on MySQL.

- Developers used different operating systems.

- A README asking people to install everything manually was fragile.

The goal was straightforward: after cloning the project, nobody should need to install MySQL locally, ask for database credentials, or guess initialization steps. A single docker-compose up should bring the project to a working state.

That idea is still valuable today. Docker is a good fit for moving these things out of a developer’s personal machine:

- Databases such as MySQL, PostgreSQL, and Redis.

- Middleware such as message queues, search engines, and object storage emulators.

- Backend services that need fixed system dependencies.

- Network relationships between services.

- Initialization scripts, test data, and local port mappings.

That old demo used one container for the Spring Boot service and another for MySQL, then used Compose to start them together. Opening localhost:8080 in a browser showed the API result.

In this kind of workflow, Docker solves reproducibility. It is not just about saving installation time. It turns environment knowledge that used to be passed around verbally into configuration committed to the repository.
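As a rough illustration of that idea, the sketch below writes a minimal Compose file for a Spring Boot service plus MySQL. It is not the original 2017 demo: the directory name, the `mysql:8.0` tag, the credentials, the `initdb` folder, and the Dockerfile that `build: .` expects next to the Compose file are all assumptions made for the example.

```shell
# Hypothetical project directory; override with DEMO_DIR if needed.
demo_dir="${DEMO_DIR:-./spring-demo}"
mkdir -p "$demo_dir/initdb"

# Quoted heredoc delimiter: nothing inside is expanded by the shell.
cat > "$demo_dir/compose.yaml" <<'EOF'
services:
  db:
    image: mysql:8.0            # assumed version; pin what the project needs
    environment:
      MYSQL_DATABASE: demo
      MYSQL_USER: demo
      MYSQL_PASSWORD: demo-local-only
      MYSQL_ROOT_PASSWORD: root-local-only
    volumes:
      - ./initdb:/docker-entrypoint-initdb.d:ro   # *.sql files run on first start
      - db-data:/var/lib/mysql
    healthcheck:
      test: ["CMD", "mysqladmin", "ping", "-h", "127.0.0.1"]
      interval: 5s
      timeout: 3s
      retries: 20
  app:
    build: .                    # expects a Dockerfile next to this file
    ports:
      - "127.0.0.1:8080:8080"
    environment:
      SPRING_DATASOURCE_URL: jdbc:mysql://db:3306/demo
      SPRING_DATASOURCE_USERNAME: demo
      SPRING_DATASOURCE_PASSWORD: demo-local-only
    depends_on:
      db:
        condition: service_healthy   # wait until MySQL answers pings
volumes:
  db-data:
EOF

echo "wrote $demo_dir/compose.yaml"
```

The point is less the exact values than where they live: the database name, credentials, init scripts, and startup order sit in a file in the repository instead of in someone's memory.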

The Local Development Trap: Filesystems and Hot Reload

A development environment is not finished just because the containers start. Frontend projects also depend on hot reload, file watching, dependency installation, and a lot of small file I/O.

I later hit a problem on Docker for Windows: a React project lived on the Windows filesystem and was mounted into a container. The page started correctly, but editing a file did not trigger webpack recompilation inside the container. The file content was synchronized, but filesystem change notifications were not reliably propagated.

At the time, the workaround was to run an extra watcher that translated Windows-side file changes into updates the Linux container could detect. It solved that specific problem, but it is not a default approach I would recommend today.

A more practical default today is:

- On Windows, use WSL2 and keep the project inside the Linux distribution’s filesystem instead of mounting it from a Windows drive.

- On macOS, if hot reload or small file I/O becomes slow, reduce the bind-mounted area and use named volumes for dependency directories when possible.

- If a frontend tool must watch files across the host/container boundary, polling can help, but it increases CPU usage.

- Pure frontend projects do not always need to put every development command inside a container. Local Node.js plus containerized middleware is often a better balance.

Docker Desktop’s WSL2 documentation also emphasizes the Linux distribution filesystem path for better development performance on Windows. That matches the lesson from the older issue.
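A small check can catch the Windows-drive case before it causes confusing hot reload behavior. The sketch below assumes WSL2's default automount root, where Windows drives appear under `/mnt/<letter>`; a customized `wsl.conf` would change that. The function name `on_windows_drive` is made up for this example.

```shell
# Succeeds when the path looks like a Windows drive mounted into WSL2.
# Assumes the default automount root /mnt.
on_windows_drive() {
  case "$1" in
    /mnt/[a-z] | /mnt/[a-z]/*) return 0 ;;
    *) return 1 ;;
  esac
}

# Check the directory given as the first argument, or the current directory.
project_dir="${1:-$PWD}"
if on_windows_drive "$project_dir"; then
  echo "warning: $project_dir is on a Windows drive; file watching may be unreliable"
else
  echo "ok: $project_dir is not under /mnt/<drive>"
fi
```

A path such as `/mnt/c/Users/me/app` triggers the warning, while `/home/me/app` inside the distribution does not.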

So Docker on a development machine is a tool, not a doctrine. It is excellent for databases, middleware, and backend dependencies. For frontend hot reload and heavy file watching, the filesystem boundary needs to be part of the design.

Why Docker Is Much Lighter on Linux Servers

My old impression that Docker was heavy mostly came from macOS and Windows. Those systems do not run Linux containers directly, so Docker Desktop has to provide a Linux environment behind the scenes.

On Windows, Docker Desktop commonly uses the WSL2 backend. On macOS, it also needs a Linux virtualization environment to host containers. A large part of the perceived overhead comes from that virtualized layer and from filesystem mapping between the host and the Linux environment.

Docker Engine on a Linux server is different. Docker’s documentation describes containers as processes running on the host, isolated with their own filesystem, networking, and process tree. The isolation relies mainly on Linux kernel features:

- namespaces isolate process, network, mount, hostname, and other views.

- cgroups limit and account for CPU, memory, I/O, and other resources.

- union filesystems such as overlayfs combine image layers with a writable container layer.

That means a container on Linux is not a full virtual machine. It still has overhead, but it is usually far smaller than running one full VM per service.
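One way to see this on a Linux host is to look up a container's main process and inspect it like any other process. The helper names below are hypothetical, and the sketch assumes Docker Engine running directly on Linux with `sudo` available for reading another process's namespace links.

```shell
# Print the host PID of a container's main process.
container_pid() {
  docker inspect --format '{{.State.Pid}}' "$1"
}

# Show the container's main process, its namespaces, and its cgroup.
# Runs in a subshell so the variables do not leak into the caller.
show_isolation() (
  pid=$(container_pid "$1") || exit 1
  ps -o pid,user,args -p "$pid"     # an ordinary entry in the host process table
  sudo ls -l "/proc/$pid/ns"        # namespace links differ from the host's own
  cat "/proc/$pid/cgroup"           # the cgroup used for limits and accounting
)
```

For example, `show_isolation redis-config` would list the Redis server process as seen from the host, with no guest kernel in between.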

IBM Research reached a similar conclusion in its container performance paper: Linux containers were close to bare-metal performance in many CPU, memory, and network benchmarks. There were still cases where storage drivers, I/O paths, or networking choices mattered, so the lesson is not “Docker is always free”. The better lesson is that Docker Desktop’s laptop experience should not be projected directly onto Linux server deployments.

A clearer distinction is:

- Docker Desktop is a developer experience tool. It is convenient, but it includes virtualization and filesystem mapping costs.

- Docker Engine on Linux is a server runtime. It uses Linux kernel capabilities directly and is suitable for lightweight services.

- Docker Desktop for Linux also runs a VM, so it is not the same thing as installing Docker Engine directly on a server.

That distinction is what made me comfortable running Redis in Docker on a small Linux server.

Linux Still Has Costs

Running Docker on Linux does not mean resources can be ignored.

Several costs still exist:

- Images and container layers use disk space, so unused images need cleanup.

- Logs may grow under Docker’s data directory unless log rotation is configured.

- Bridge networking and port publishing add some network overhead.

- overlayfs is not always ideal for write-heavy workloads.

- bind mounts, volumes, permissions, and UID/GID mapping need attention.

- Containers have no memory limit by default, so a runaway service can still pressure the host.

The right conclusion is not “Docker is light, so anything goes”. The right conclusion is to give services clear boundaries: memory limits, log limits, persistent data, and explicit port exposure.
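For the log and disk items, a minimal sketch might look like the following. It writes a candidate `daemon.json` to a scratch path for review instead of touching `/etc/docker` directly. The 10 MB size and three-file count are arbitrary example values, and the scratch file name is an assumption.

```shell
# Write a candidate daemon configuration to a scratch file for review.
candidate="${TMPDIR:-/tmp}/daemon.json.candidate"
cat > "$candidate" <<'EOF'
{
  "log-driver": "json-file",
  "log-opts": {
    "max-size": "10m",
    "max-file": "3"
  }
}
EOF
echo "candidate written to $candidate"

# To apply it (merge by hand if /etc/docker/daemon.json already exists):
#   sudo install -m 0644 "$candidate" /etc/docker/daemon.json
#   sudo systemctl restart docker
#
# To see what is using disk space, and to remove dangling images:
#   docker system df
#   docker image prune
```

Daemon-level log options only apply to containers created after the change, so existing containers need to be recreated to pick them up.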

Installing Docker Engine on Ubuntu

On a server, Docker Engine is the right target, not Docker Desktop. Docker’s official Ubuntu installation guide uses its apt repository, and the exact commands may evolve over time, so the official page should be treated as the long-term source of truth.

A common installation flow looks like this:

sudo apt-get update

sudo apt-get install -y ca-certificates curl

sudo install -m 0755 -d /etc/apt/keyrings

sudo curl -fsSL https://download.docker.com/linux/ubuntu/gpg -o /etc/apt/keyrings/docker.asc

sudo chmod a+r /etc/apt/keyrings/docker.asc

echo \
  "deb [arch=$(dpkg --print-architecture) signed-by=/etc/apt/keyrings/docker.asc] https://download.docker.com/linux/ubuntu \
  $(. /etc/os-release && echo "${UBUNTU_CODENAME:-$VERSION_CODENAME}") stable" | \
  sudo tee /etc/apt/sources.list.d/docker.list > /dev/null

sudo apt-get update

sudo apt-get install -y docker-ce docker-ce-cli containerd.io docker-buildx-plugin docker-compose-plugin

Then enable and check Docker:

sudo systemctl enable --now docker

docker --version

docker compose version

If you do not want to type sudo for every Docker command, you can add the current user to the docker group:

sudo usermod -aG docker "$USER"

This requires a new login session to take effect. It is also a security decision: membership in the docker group gives access to the Docker socket, which is effectively root-level control over the host, so it should not be granted casually.
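Because the group change only shows up in a new session, it can help to check what the current session actually sees. This is a small sketch; the function name `session_has_group` is made up for the example.

```shell
# Succeeds when the current session's group list contains the group named $1.
# `id -nG` without a user argument reports the groups of the running process,
# which is what decides whether the Docker socket is reachable.
session_has_group() {
  id -nG | tr ' ' '\n' | grep -qxF "$1"
}

if session_has_group docker; then
  echo "this session can use docker without sudo"
else
  echo "this session is not in the docker group yet; log out and back in"
fi
```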

Running a Constrained Redis with Compose

My concrete use case was a small Redis instance for configuration synchronization. Redis is a good Docker example: the image is mature, startup is fast, resource usage is low, and it still forces you to think about ports, persistence, memory limits, and security.

Create a directory:

mkdir -p ~/services/redis-config/data

cd ~/services/redis-config

Create .env:

REDIS_PASSWORD=change-this-password
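Rather than keeping the placeholder value, the password can be generated. The sketch below is one possible approach, with hypothetical helper names; it sticks to hex characters so the value needs no quoting in `.env` or on a command line.

```shell
# Print 48 hex characters read from the kernel's random source.
gen_secret() {
  head -c 24 /dev/urandom | od -An -tx1 | tr -d ' \n'
}

# Write an env file at path $1. The umask makes a newly created file
# readable only by its owner; it does not tighten an existing file.
write_env_file() (
  umask 077
  printf 'REDIS_PASSWORD=%s\n' "$(gen_secret)" > "$1"
)
```

Running `write_env_file .env` in the service directory would replace the placeholder; the generated value then takes the place of `change-this-password` wherever the password is typed.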

Create compose.yaml:

services:
  redis:
    image: redis:8-alpine
    container_name: redis-config
    restart: unless-stopped
    ports:
      - "127.0.0.1:6379:6379"
    command:
      - redis-server
      - --appendonly
      - "yes"
      - --maxmemory
      - "64mb"
      - --maxmemory-policy
      - allkeys-lru
      - --requirepass
      - "${REDIS_PASSWORD:?set REDIS_PASSWORD}"
    volumes:
      - ./data:/data
    mem_limit: 128m

Several choices matter here:

- redis:8-alpine pins the major Redis version and avoids long-term reliance on latest.

- The port is bound to 127.0.0.1, so it is only reachable from the same host by default.

- AOF is enabled so data is persisted under the mounted data directory.

- Redis has its own maxmemory and eviction policy.

- The container has a memory limit, so Redis or an abnormal process cannot consume the whole server.

- restart: unless-stopped brings the service back after a reboot.
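The `${REDIS_PASSWORD:?set REDIS_PASSWORD}` form in the Compose file follows the same rule as POSIX shell parameter expansion: it fails with the given message when the variable is unset or empty. The sketch below reproduces that behavior in plain shell, with a hypothetical function name, so the effect can be seen without starting anything.

```shell
# Fails with "set REDIS_PASSWORD" when the variable is unset or empty.
# The body is a subshell, so a failed expansion ends only that subshell.
require_password() (
  : "${REDIS_PASSWORD:?set REDIS_PASSWORD}"
)

if ( unset REDIS_PASSWORD; require_password ) 2>/dev/null; then
  echo "unexpected: expansion succeeded without a password"
else
  echo "a missing password is rejected up front"
fi
```

On the Compose side, `docker compose config --quiet` validates the file and applies the same check before any container is created. Without `--quiet` it prints the resolved configuration, including the password.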

If Redis is only used by applications on the same host, binding to 127.0.0.1 is the safer default. If it needs to be accessed from another server or used for replication, bind it to an internal network address and restrict the source addresses with the cloud provider’s security group. Do not expose Redis directly to the public internet.

Docker’s firewall documentation also calls out an easily missed detail: ports published by Docker are handled by rules that Docker itself manages, so they can bypass restrictions configured through ufw or firewalld. On cloud servers, it is better to combine security groups, internal IP bindings, and service-level authentication instead of relying on the local firewall alone.
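After starting a service, it is worth confirming which address the published port is really bound to. The filter below is a minimal sketch that reads `ss -ltnH` output on stdin and reports listeners bound to every interface. The function name is hypothetical, and it assumes Docker's default userland proxy, which makes published ports visible as ordinary listening sockets.

```shell
# Print local addresses that listen on port $1 on all interfaces.
# Expects `ss -ltnH` output on stdin, where column 4 is Local Address:Port.
exposed_on_all_interfaces() {
  awk -v port="$1" '
    $4 == ("0.0.0.0:" port) || $4 == ("[::]:" port) || $4 == ("*:" port) { print $4 }
  '
}

# Usage on the server:
#   ss -ltnH | exposed_on_all_interfaces 6379
```

Empty output for port 6379 is the expected result with the `127.0.0.1` binding used here; any line printed means the port is reachable from other machines unless something upstream blocks it.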

Start it:

docker compose up -d

docker compose ps

Verify it:

docker compose exec redis redis-cli

Then run:

AUTH change-this-password

PING

PONG means Redis is working.
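For scripts or quick checks, the same verification can run non-interactively. The wrapper below is a sketch with a hypothetical name; it assumes `REDIS_PASSWORD` is already set in the shell (for example by sourcing `.env`) and passes it through `REDISCLI_AUTH`, an environment variable that redis-cli reads in place of an explicit AUTH command.

```shell
# Run one redis-cli command inside the "redis" service.
# -T disables TTY allocation so the output can be captured or piped.
redis_cmd() {
  docker compose exec -T -e REDISCLI_AUTH="$REDIS_PASSWORD" redis redis-cli "$@"
}

# Usage from the service directory:
#   . ./.env
#   redis_cmd PING
```

This keeps the password out of shell history, although it is still visible in the host's process list while the command runs.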

Check resource usage:

docker stats redis-config --no-stream

free -h

To inspect Redis memory usage from Redis itself:

AUTH change-this-password

INFO memory

On my lightweight server, an idle Redis container used only a few to a dozen megabytes of memory, and CPU usage was almost negligible. The exact number depends on Redis version, data volume, configuration, and host environment, but the order of magnitude is enough to show that running a small Redis container on a low-end Linux server is reasonable.
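To pull a single number out of `INFO memory` instead of reading the whole report, a small filter is enough. The function name below is made up for this sketch; it reads INFO output on stdin and strips the carriage returns that redis-cli leaves at the end of each line.

```shell
# Print the value of one INFO field, for example used_memory_human.
# INFO lines look like "key:value" and end with CRLF.
info_field() {
  tr -d '\r' | awk -F: -v key="$1" '$1 == key { print $2 }'
}

# Usage, with the password already set in the shell:
#   docker compose exec -T -e REDISCLI_AUTH="$REDIS_PASSWORD" redis \
#     redis-cli INFO memory | info_field used_memory_human
```

Comparing `used_memory_human` with `maxmemory_human` from the same report shows how close the instance is to the configured limit.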

When Docker Is a Good Fit

Putting these experiences together made my Docker judgment more concrete.

Docker is a good fit when:

- A development environment needs consistent databases, middleware, or backend dependencies.

- A server needs lightweight services without polluting the host with manual installs.

- Several services need explicit network relationships, startup behavior, and environment variables.

- Runtime versions should be pinned by images.

- A service should be easy to move to another Linux machine.

Docker needs more caution when:

- A frontend project on macOS or Windows depends heavily on file watching and hot reload.

- A database has heavy writes and needs careful disk, volume, backup, and recovery planning.

- The server has extremely limited memory, such as 512 MB, while running multiple services.

- The setup is only a docker run command without log, persistence, security, or upgrade planning.

- Containers are treated as a hard security boundary that cannot affect the host.

Docker’s best role is to turn runtime environment into code while giving services clear operational boundaries. It should not replace every local development tool, and it should not hide deployment design.

A Safer Default Practice

For personal servers or small projects, my default Docker practice is:

- Install Docker Engine on Linux servers, not Docker Desktop.

- Use docker compose for services instead of keeping long docker run commands in notes.

- Pin image major versions, such as redis:8-alpine, instead of depending on latest forever.

- Store data in explicit volumes or host directories.

- Set restart policies and memory limits for containers.

- Bind service ports to 127.0.0.1 or internal IPs by default.

- Put Nginx, Caddy, or another gateway in front when public access is needed.

- Do not expose database-like services directly to the public internet.

- Check docker ps, docker stats, docker logs, and disk usage regularly.

- Read release notes before image upgrades and keep a rollback path.

- Back up important data at the host or storage level; a running container is not a backup.

This is not complicated, but it prevents many cases where a container starts easily and becomes hard to maintain later.
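The backup item is the easiest one to postpone, so here is a minimal sketch of a host-level backup for a bind-mounted data directory. The function name and archive naming scheme are assumptions. It only archives files; for a consistent copy of a busy Redis instance, stopping the container briefly or following Redis's own persistence guidance is the safer route.

```shell
# Archive a data directory into a timestamped tarball.
# $1 = data directory, $2 = backup directory. Prints the archive path.
backup_dir() (
  src=$1
  dest=$2
  stamp=$(date +%Y%m%d-%H%M%S)
  archive="$dest/$(basename "$src")-$stamp.tar.gz"
  mkdir -p "$dest" || exit 1
  # -C keeps paths inside the archive relative to the parent directory.
  tar -czf "$archive" -C "$(dirname "$src")" "$(basename "$src")" || exit 1
  printf '%s\n' "$archive"
)
```

For example, `backup_dir ~/services/redis-config/data ~/backups` would produce something like `~/backups/data-<timestamp>.tar.gz`. Files written by the container may belong to another user, so the command may need `sudo`.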

Conclusion

My understanding of Docker has gone through three stages.

At first, Docker was a development environment tool: Compose could encode JDK, MySQL, backend services, and network relationships, reducing project startup cost.

Then Docker Desktop on macOS and Windows made Docker feel heavy, especially around filesystems, hot reload, and memory usage.

Later, running Redis on a Linux server made the distinction clearer: Docker Engine on Linux should not be judged by Docker Desktop’s laptop overhead. For lightweight services, Docker on Linux is practical, as long as persistence, resource limits, and security boundaries are designed properly.

Docker is neither a synonym for performance overhead nor a universal deployment answer. It is a reproducible runtime description. Used with restraint and clear boundaries, it fits personal projects and small services very well.